When you need to move the contents of one or more datastores, you sometimes stumble upon files that you didn’t know were there. One such type is the dump files that are stored in a VM’s directory on the datastore.

The files I encountered were named like this:

- vmware64-core*.gz

- vmware-vmx-zdump.*

There isn’t much information available on what exactly these files are used for, other than that they seem to be created when the VM Monitor crashes or encounters a serious problem.

Since these files were quite old, and since I didn’t have any open tickets with VMware, I decided to remove these files. But of course in the PowerCLI way with a function 😉

The Script

```powershell
function Get-Dump {
<#
.SYNOPSIS  Finds dump files on datastores
.DESCRIPTION The function will look for dump files
  on one or more datastores. The dump filenames contain
  "zdump" or "core"
.NOTES  Author:  Luc Dekens
.PARAMETER Datastore
  The datastore(s) the function has to search.
.EXAMPLE
  PS> Get-Dump -Datastore DS1
.EXAMPLE
  PS> Get-Datastore -Name DS* | Get-Dump
#>

  param(
  [Parameter(ValueFromPipeline=$true)]
  [PSObject[]]$Datastore
  )

  begin {
    $spec = New-Object VMware.Vim.HostDatastoreBrowserSearchSpec
    $spec.MatchPattern = "*zdump*","*core*"
    $spec.details = New-Object VMware.Vim.FileQueryFlags
    $spec.details.fileType = $true
    $spec.details.fileSize = $true
    $spec.details.modification = $true
    $spec.details.fileOwner = $true
    $spec.sortFoldersFirst = $true
  }
  process {
    if(!$Datastore){
      $Datastore = Get-Datastore
    }
    else{
      $Datastore = $Datastore | %{   # resolve every name, not only the last one
        if($_ -is [System.String]){
          Get-Datastore -Name $_
        }
        else {$_}
      }
    }
    $Datastore | %{
      $dsBrowser = Get-View $_.ExtensionData.Browser
      $task = $dsBrowser.SearchDatastoreSubFolders("[" + $_.Name + "] ",$spec)
      $task | Where {$_.File} | Select -Property FolderPath -ExpandProperty File |
      where {$_ -isnot "VMware.Vim.FolderFileInfo"} |
      Select Path,Modification,FileSize,FolderPath
    }
  }
}

function Remove-Dump {
<#
.SYNOPSIS  Removes files on datastores
.DESCRIPTION The function removes files on datastores.
  The filenames need to be provided as objects returned
  by the Get-Dump function.
.NOTES  Author:  Luc Dekens
.PARAMETER File
  The file(s) to remove
.EXAMPLE
  PS> Get-Dump -Datastore DS1 | Remove-Dump
.EXAMPLE
  PS> Get-Datastore -Name DS* | Get-Dump | Remove-Dump -Confirm:$false
#>

  [cmdletBinding(SupportsShouldProcess=$true,ConfirmImpact='High')]
  param(
  [Parameter(ValueFromPipeline=$true)]
  [PSObject[]]$File
  )

  begin {
    $fileMgr = Get-View FileManager
  }

  process {
    $File | %{
      $dsName = $_.FolderPath.Split(' ')[0].TrimStart('[').TrimEnd(']')
      $dc = (Get-Datastore -Name $dsName).Datacenter.ExtensionData.MoRef
      $name = $_.FolderPath + $_.Path
      if($pscmdlet.ShouldProcess($dsName,"Deleting file $name")){
        $fileMgr.DeleteDatastoreFile($name,$dc)
      }
    }
  }
}
```

Annotations

Line 18: The function accepts one or more datastores. The datastores can be passed as datastore objects returned by the Get-Datastore cmdlet, or as datastore names.

Line 22-29: The HostDatastoreBrowserSearchSpec that is used to find the dump files. In the MatchPattern property the function defines the name patterns to look for. Since this specification is always the same, we define it in the Begin section of the function.

Line 32-42: The function accepts datastore objects or datastore names. These lines take care of converting datastore names to datastore objects. If no Datastore value was passed, the function will search all the datastores.

Line 45: The function uses the SearchDatastoreSubFolders method to find the dump files. The same search could also be done through the PowerCLI datastore provider, but in my tests the SearchDatastoreSubFolders method was about 20 times faster!

Line 48: The function returns objects that hold the basic information about each file: the name, the folder path, the modification time and the size.

Line 68: The Remove-Dump function supports the WhatIf and Confirm parameters.

Line 75: The FileManager is preferred over the HostDatastoreBrowser to remove files.

Line 80-81: Since the function provides the file path as “[datastore] folder/file”, it has to pass the datacenter MoRef to the DeleteDatastoreFile method.

Line 83: The ShouldProcess call handles the WhatIf and Confirm parameters. The file is only deleted when the call returns $true.
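The path handling and the ShouldProcess check described in these annotations can be tried without a vCenter connection. What follows is only a minimal sketch, not part of the original script: `Split-DatastorePath` and `Test-DumpRemoval` are hypothetical names, the sample values are made up, and the regex assumes folder paths of the form `[datastore] folder/`. Unlike splitting on the first space, the regex also copes with datastore names that contain spaces.

```powershell
# Hypothetical helper (not part of the original script): splits a
# "[datastore] folder/" path into its datastore name and folder part.
function Split-DatastorePath {
    param([Parameter(Mandatory = $true)][string]$FolderPath)

    # Everything between the leading '[' and the first ']' is the datastore name.
    if ($FolderPath -match '^\[(?<ds>[^\]]+)\]\s*(?<folder>.*)$') {
        [PSCustomObject]@{
            Datastore = $Matches['ds']
            Folder    = $Matches['folder']
        }
    }
    else {
        throw "Unexpected datastore path format: $FolderPath"
    }
}

# Hypothetical dry-run variant of Remove-Dump: it only emits the full path
# it would delete, so it is safe to run without PowerCLI.
function Test-DumpRemoval {
    [CmdletBinding(SupportsShouldProcess = $true, ConfirmImpact = 'High')]
    param(
        [Parameter(ValueFromPipeline = $true)]
        [PSObject[]]$File
    )

    process {
        foreach ($f in $File) {
            $parts = Split-DatastorePath -FolderPath $f.FolderPath
            $name  = $f.FolderPath + $f.Path
            # ShouldProcess returns $false under -WhatIf, or when the user
            # declines the confirmation prompt.
            if ($PSCmdlet.ShouldProcess($parts.Datastore, "Deleting file $name")) {
                $name
            }
        }
    }
}

# Sample object shaped like the Get-Dump output (values are made up).
$sample = [PSCustomObject]@{
    FolderPath = '[My Datastore 1] vm01/'
    Path       = 'vmware-vmx-zdump.000'
}

$parsed    = Split-DatastorePath -FolderPath $sample.FolderPath
$confirmed = @($sample | Test-DumpRemoval -Confirm:$false)
$whatIf    = @($sample | Test-DumpRemoval -WhatIf)
```

With `-Confirm:$false` the sketch emits the path it would delete, while with `-WhatIf` it only prints the "What if" message and emits nothing.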

Sample Usage

The functions are very simple to use. To look for dump files on a specific datastore, you can run:

```powershell
$DS = Get-Datastore -Name ds1
Get-Dump -Datastore $DS
```

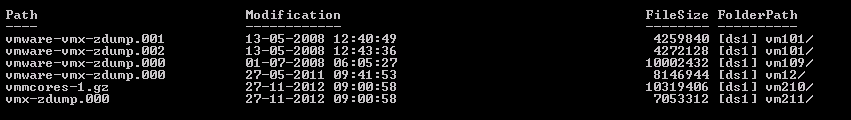

This will, provided there are dump files present on that datastore, return an object for each dump file. The output on the console looks something like this.

To remove the dump files, you can just place the returned objects on the pipeline and call the Remove-Dump function.

```powershell
Get-Datastore -Name ds* | Get-Dump | Remove-Dump -Confirm:$false
```

You can of course remove files a bit more intelligently. You could for example remove all dump files that are more than 1 year old.

```powershell
$date = (Get-Date).ToUniversalTime().AddYears(-1)
Get-Dump | Where {$_.Modification -lt $date} | Remove-Dump -Confirm:$false
```

Note that the Modification property holds the UTC time.
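Because the property is in UTC, a cutoff built from the local clock should be converted before comparing, and a local-time column can be handy when reviewing the results. The snippet below is a small sketch of that idea on stand-in objects. The file names and dates are made up, and `ModificationLocal` is a hypothetical column name, not something Get-Dump returns.

```powershell
# Cutoff expressed in UTC, to compare like-for-like with Modification.
$cutoff = (Get-Date).ToUniversalTime().AddDays(-365)

# Stand-in objects shaped like Get-Dump output (values are made up).
$files = @(
    [PSCustomObject]@{ Path = 'old-zdump.000'; Modification = $cutoff.AddDays(-30) }
    [PSCustomObject]@{ Path = 'new-zdump.000'; Modification = $cutoff.AddDays(30) }
)

# Keep the files older than the cutoff and add a local-time column for display.
$old = @($files |
    Where-Object { $_.Modification -lt $cutoff } |
    Select-Object Path, Modification,
        @{ Name = 'ModificationLocal'; Expression = { $_.Modification.ToLocalTime() } })
```

Only the first stand-in object passes the filter. The UTC value stays available for further comparisons, while the extra column is for reading only.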

Enjoy !

Chad

Hey Luc, I re-purposed this script to help find VMs powered off for more than 30 days where the events showing the power off are no longer present, because the vCenter DB was purged of such items long ago. We have a ton of powered off VMs. I am running into a block trying to match datastore VM folder names back to VMs. The VC in question uses a home-grown automation that isn’t very tidy. It creates and destroys VMs frequently, so sometimes VM folder names don’t match their VM names. So I am trying to match the following, for example: vmname.example.com_1 to vmname.example.com

The name with the _1 suffix is the folder name. Any idea on how to use the following to search for that in a pipeline, using the folder name as input to match after Get-Dump found it? I hope it’s easy to understand what I am attempting to do.

```powershell
$faillist = @()
$vms = $faildellist | ForEach-Object {
    Try { Get-VM -Name $_ -ErrorAction Stop | Where-Object {$_.PowerState -eq "PoweredOff"} }
    Catch {
        $err = $_.Exception.Message
        Write-Host "failed > $err"
        $fail = $err #| %{$_.Split("\'",2)[1];} | %{$_.Split("\'",2)[0];}
        $faillist += $fail
    }
}
```

Thanks, Chad